Designing AI Product Systems

How I structure AI-enabled products so complexity stays controlled and user trust remains intact.

Challenge

AI introduces variability into product behaviour.

Without clear boundaries, this can lead to:

Ambiguous state transitions

Silent automation

Reduced user trust

Poor observability

The risk is not that AI generates different output from one request to the next.

The risk is that the surrounding system becomes unclear.

Approach

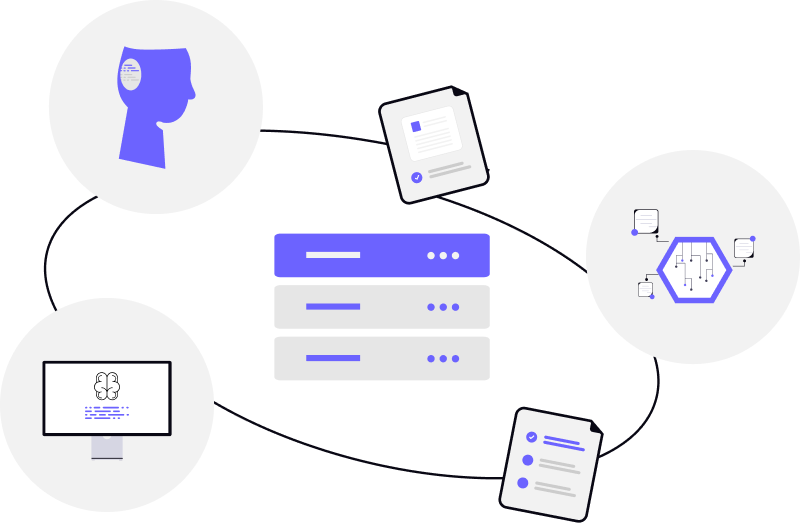

I design deterministic structure around probabilistic output.

That includes:

Explicit state modelling (Draft → Review → Confirm → Execute)

Clear separation between suggestion and execution

Guardrail definition for high-risk actions

Human approval checkpoints

Designed recovery paths

Structured behavioural instrumentation
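Here is a minimal Python sketch of how these pieces could fit together. The four states come from the list above. Everything else is a hypothetical illustration, not the implementation in the repository: the `Workflow` class, the extra `DONE` terminal state, the `HIGH_RISK_ACTIONS` set, the `run` callback and the shape of the event records.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List, Optional, Set


class State(Enum):
    DRAFT = "draft"
    REVIEW = "review"
    CONFIRM = "confirm"
    EXECUTE = "execute"
    DONE = "done"  # hypothetical terminal state, not part of the original model


# Explicit transition table: any move not listed here is rejected.
TRANSITIONS: Dict[State, Set[State]] = {
    State.DRAFT: {State.REVIEW},
    State.REVIEW: {State.CONFIRM, State.DRAFT},  # reviewer can send a suggestion back
    State.CONFIRM: {State.EXECUTE, State.REVIEW},
    State.EXECUTE: {State.DONE, State.REVIEW},  # recovery: failed runs return to review
    State.DONE: set(),
}

# Hypothetical set of actions that need an extra, explicit confirmation.
HIGH_RISK_ACTIONS = {"delete_records", "send_payment"}


class TransitionError(Exception):
    """Raised when a move is not allowed by the state model or a guardrail."""


@dataclass
class Workflow:
    action: str
    suggestion: str  # AI output is held as a suggestion, never run directly
    state: State = State.DRAFT
    approved_by: Optional[str] = None
    events: List[dict] = field(default_factory=list)

    def _move(self, target: State, **details: str) -> None:
        if target not in TRANSITIONS[self.state]:
            raise TransitionError(f"{self.state.value} -> {target.value} is not allowed")
        # One structured event per transition, so behaviour can be observed later.
        self.events.append({"from": self.state.value, "to": target.value, **details})
        self.state = target

    def submit_for_review(self) -> None:
        self._move(State.REVIEW)

    def approve(self, user: str) -> None:
        # Human approval checkpoint: only Review -> Confirm, and the approver is recorded.
        self._move(State.CONFIRM, approved_by=user)
        self.approved_by = user

    def execute(self, run: Callable[[str], None], confirmed: bool = False) -> None:
        # Guardrail: high-risk actions need an explicit confirmation flag.
        if self.action in HIGH_RISK_ACTIONS and not confirmed:
            raise TransitionError(f"{self.action} requires explicit confirmation")
        self._move(State.EXECUTE)
        try:
            run(self.suggestion)
        except Exception as exc:
            # Recovery path: back to Review with the error logged and approval cleared.
            self.approved_by = None
            self._move(State.REVIEW, error=str(exc))
        else:
            self._move(State.DONE)
```

Calling `approve` on a draft raises `TransitionError`, so a suggestion cannot skip review. If the `run` callback fails, the workflow lands back in Review with the error recorded in `events` instead of failing silently.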

AI output may vary.

System behaviour should not.
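One way to keep system behaviour fixed while output varies is to validate model output at the boundary, before it can enter the workflow. This sketch assumes, hypothetically, that the model is asked to return JSON with `action` and `text` fields. The names `ALLOWED_ACTIONS`, `Suggestion`, `parse_suggestion` and `next_state` are illustrative only.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Hypothetical whitelist of actions the product is willing to suggest.
ALLOWED_ACTIONS = {"send_email", "create_task"}


@dataclass(frozen=True)
class Suggestion:
    action: str
    text: str


def parse_suggestion(raw: str) -> Optional[Suggestion]:
    """Turn raw model output into a typed suggestion, or None if it can't be trusted."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    action = data.get("action")
    text = data.get("text")
    # Check types before set membership so unhashable values can't raise.
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return None
    if not isinstance(text, str) or not text.strip():
        return None
    return Suggestion(action=action, text=text.strip())


def next_state(raw: str) -> str:
    # Only two possible outcomes, whatever the model produced.
    return "review" if parse_suggestion(raw) is not None else "draft"
```

However the model phrases its answer, the system responds in only two ways. A well-formed suggestion moves to review, and anything else stays in draft, where the failure can be shown to the user instead of being acted on.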

Outcome

The result is AI-enabled products that remain:

Clear in their behaviour

Controlled in their execution

Measurable in their adoption

Recoverable when failure occurs

Users understand what the system is doing.

Teams can observe how it behaves.

Trust scales alongside capability.

Full documentation and working examples are available in my public repository →

View AI Product Systems on GitHub